Anthro-Complexity and Cyber Security

I’ve been meaning to write about Anthro-complexity and the Cynefin framework, and to give a quick introduction to what it is, how it works and what it isn’t.

For that, I’ll position Anthro-complexity first and discuss Cynefin in future blog posts, rather than focusing on the visualisation that most people come into contact with.

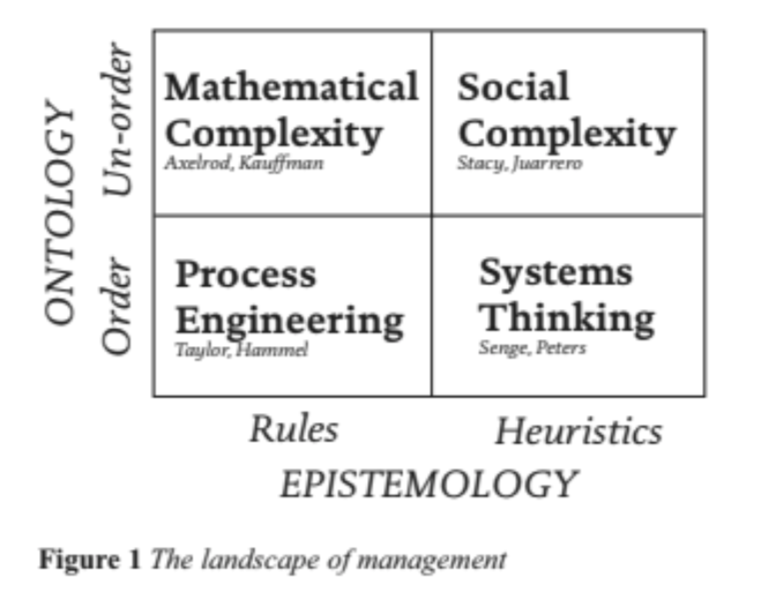

Peter Stanbridge and Dave Snowden, when writing an article for the European Union on Innovation, developed the model above, which is an input-output model.

This is what we typically expect from Scientific Management: a high level of constraint which produces predictable results. This doesn’t only relate to manual tasks, as a computer may be required for the volume of computations. Everything is defined and structured, and we know what to do. From a cyber security perspective, ‘Alerting’ is something I’d put in this category, as we define a simple input and how it relates to a simple output that we trigger or perform.
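To make the ‘Alerting’ example concrete, here is a minimal sketch of a fully constrained input-output rule. The rule name, the threshold and the `evaluate` function are hypothetical and chosen purely for illustration; they aren’t taken from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    """A hypothetical alert rule: one simple input, one simple output."""
    name: str
    threshold: int  # number of failed logins that triggers the alert


def evaluate(rule: AlertRule, failed_logins: int) -> str:
    """Return the predefined action for a given count of failed logins."""
    if failed_logins >= rule.threshold:
        return "raise_alert"
    return "no_action"


# Illustrative values only.
rule = AlertRule(name="brute-force-login", threshold=5)
print(evaluate(rule, 3))
print(evaluate(rule, 7))
```

The same input always produces the same output, which is exactly what makes this kind of work a good fit for high constraint.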

To some of the “purist” Systems Thinkers out there, this is where you may disagree, but Dave Snowden argues that many Systems Thinking experts started to “intuit complexity but not get it”, because the science didn’t yet exist at the time most of the literature was written. The underlying metaphor assumes that one can design systems: faced with a Complex input, they handle it by defining the looked-for outputs and creating the vision, mission statements and outcome-based measures to close the gap. The perversity of this approach at scale is that it leads to over-measurement of outcomes, with measures being built on top of measures in vain attempts to control a system which is inherently uncontrollable and complex. Even within Cynefin, this is still appropriate in the Liminal transition between the Complicated and Complex domains, but when we get lots of agent iterations it becomes less useful.

From a cyber security perspective, Systems Thinking is immensely popular and I personally believe it still has lots of good things to offer us, but there’s more to it. Good security designers typically follow a systems approach (or systemic approach), breaking complexity down into smaller parts and analysing each subsystem in its relations with peers, other subsystems and the wider system, so we improve our chances of considering failures and impacts across different parts of the system.

Then we get Computational Complexity, which is mostly about simulation. This is valuable, but it assumes that one can work out the rules and that the situation is responded to consistently. In Cyber Security, most of the work being developed under the AI banner would fall under this category, in that models are developed and trained with the promise that they will then be able to make “good predictions” to assist decision making.

And we finally get to Anthro-complexity, which Dave Snowden jokingly argues is based on a simple proposition: “Human beings aren’t ants”.

As Dave puts it, we have “Intelligence, awareness of the situation, switch fluidly between identities (not single agents). It aims to bring together anthropology, cognitive neuroscience and complexity science into a new discipline that can learn from traditional Complex Adaptive systems theory but isn’t bound by it”.

In Cyber Security in particular, we “feel” anthro-complexity in our veins on a daily basis, but without a framework to discuss it, it gets difficult to iterate and improve. Two great examples are Security Awareness and Policy Compliance. We write all the things, try to make them easier and more palatable, and people still don’t follow them. We keep discussing incentives and consequences, often without realising we’re “gap thinking” and unlikely to get the result we want.

It’s in the iterations of daily work with processes, systems and human ecologies that activities happen. A better way to deal with Complex input and Complex output is to first get a better grasp of people’s natural dispositions (do they see security as a general hindrance, a nice-to-have, or something which directly supports their identity and objectives?), then take advantage of those dispositions to bring about change by experimenting with coherent approaches and focusing on vector measurements (within reach and comprehension from the current state).
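As a rough illustration of the difference between “gap thinking” and a vector measurement, the sketch below contrasts a gap-to-target number with a direction and rate of change read from the current state. This is my own simplification, not a definition from Dave Snowden’s work; the function names and the phishing-report figures are made up for the example.

```python
def gap_to_target(current: float, target: float) -> float:
    """Gap thinking: how far we are from an idealised future state."""
    return target - current


def vector(readings: list[float]) -> tuple[str, float]:
    """Vector measure: direction and average change per period, from where we are."""
    if len(readings) < 2:
        raise ValueError("need at least two readings to measure a direction")
    rate = (readings[-1] - readings[0]) / (len(readings) - 1)
    if rate > 0:
        direction = "improving"
    elif rate < 0:
        direction = "worsening"
    else:
        direction = "flat"
    return direction, rate


# Hypothetical share of staff reporting simulated phishing emails, per quarter.
phishing_report_rate = [0.10, 0.12, 0.15, 0.19]

print(gap_to_target(phishing_report_rate[-1], target=0.90))
print(vector(phishing_report_rate))
```

The gap number only says how far away the ideal is, whereas the vector says whether the latest experiments are moving people in a useful direction and how quickly.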

Another interesting aspect of this matrix is that both Computational Complexity and Systems Thinking take a modelling approach, while Scientific Management and Anthro-complexity take a framework approach. A framework approach implies that what matters is to look at things from different perspectives and respect human judgment, which translates as humans being front and center.

The key take-away is that all of the above have value, within limits and context. So the key is to understand their limitations, what we aim to achieve and what’s the best tool for the job at hand.

Take-aways

When it comes to looking at things from different perspectives and applying that in the real world, I’m a big fan of John Allspaw’s work in Resilience Engineering (https://devopsdays.org/events/2019-washington-dc/program/john-allspaw/), which heavily references taking feedback from the users of the system and running effective post-mortems in a psychologically safe environment to build robustness in systems. I’m also a fan of Jabe Bloom’s work on DevOps and Sociotechnicity (https://www.youtube.com/watch?v=WtfncGAeXWU), a brilliant piece on stakeholder interactions and how concerns over different time spans of the system need to be considered for effective collaboration.

For Cyber Security, it’s no longer enough to stay within our narrow scope of expertise, or even to extend it only as far as business or market risk. If we’re to help shape the next generation of consumer and professional use of security systems and improve secure behaviours in our organisations, we need to be better equipped to practically apply an understanding of Social Sciences and Complex Adaptive Systems to have a chance at getting continuously better results. Anthro-Complexity (as opposed to other types of frameworks) does not ditch what came before it. It helps us make sense of the type of system in front of us and ensures we apply the right frameworks and techniques to address the problem in its current context, working towards better stories about why, what, how, where and when people do things and who does them.